Everyone talks about the Cloud nowadays. But talking about it isn’t the same as doing it right.

For many years Informatics Matters has been embracing cloud technology and can bring that knowledge into play for your projects. Whilst most organisations are now aware of Docker and maybe other containerisation technologies, and may even have started using these, Informatics Matters has been doing this for several years and is already ahead of the game on the sorts of issues you are likely to hit.

Containerisation is widely recognised as a useful technology but, in itself, it is not sufficient to address the complete needs of the software development lifecycle. Container orchestration, security and other matters also need to be handled. You need to consider how to build and deploy your containerised apps, how to scale them when load increases, and how to keep them running when you encounter software or hardware failures. And of course, all of this needs to be done securely.

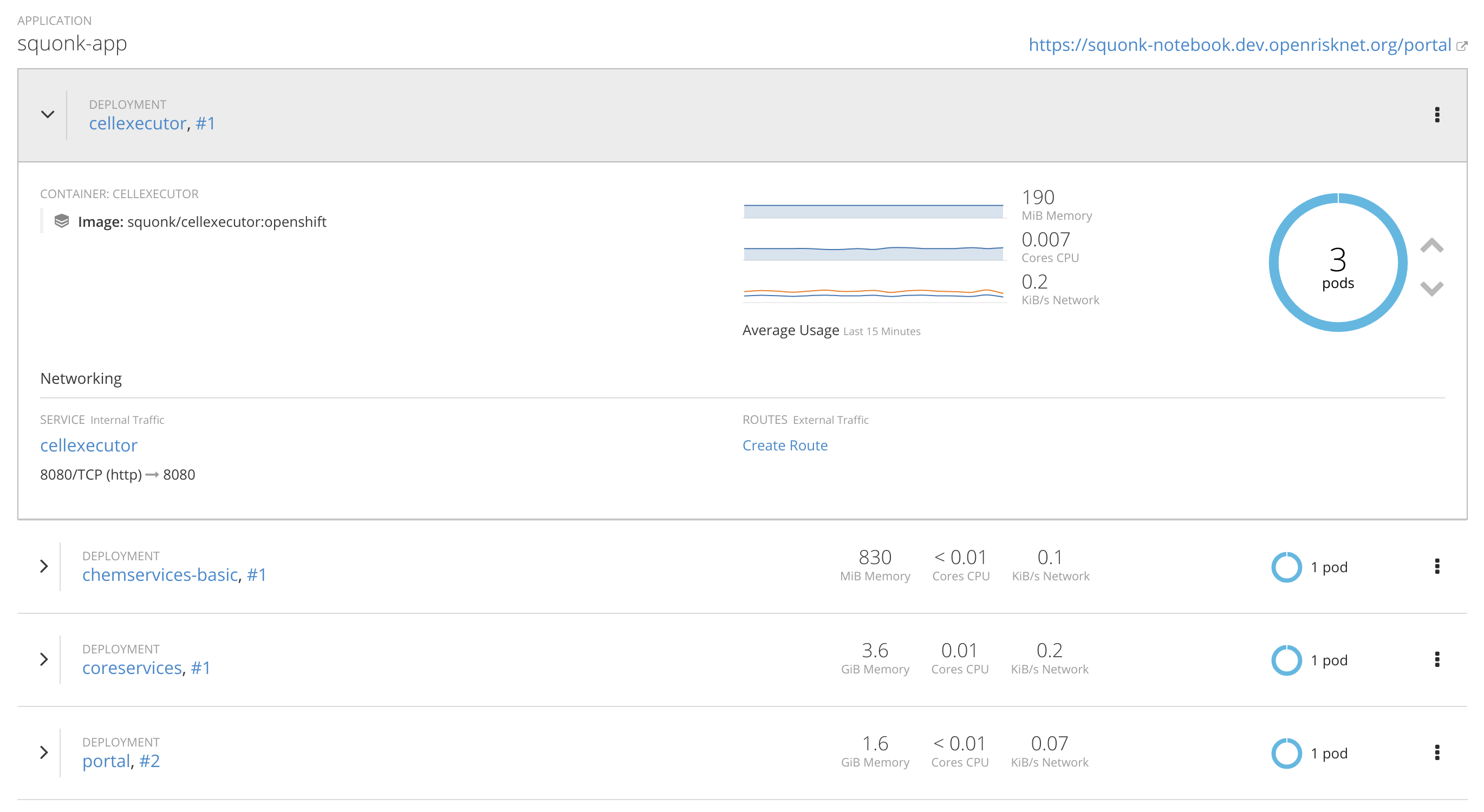

To handle this Informatics Matters has been using Google’s Kubernetes, and in particular, Red Hat’s OpenShift which provides a comprehensive and robust way to handle container orchestration. We can help you get up to speed with these technologies and/or can create and manage environments for you.

As an example, Informatics Matters is a partner in the OpenRiskNet Horizon 2020 e-infrastructure project for chemical risk assessment. We are taking a lead role in generating the computational infrastructure, which also provides the basis for the Squonk platform. To find out more you can read the project “D3.2 First documentation of the core e-infrastructure” report (ref) that describes the environment based on OpenShift running on the OpenStack based Swedish Science Cloud.

Considerations

Here are a few examples of the things that need to be handled and that we can help you with.

Building Docker Images

Docker images are created from a definition found in a Dockerfile. The concept of a Dockerfile is quite simple and it’s not too difficult to get one running. However, that doesn’t mean that it’s easy to write a good Dockerfile. A number of aspects need to be carefully considered:

Image size

One of the key advantages of using Docker containers is that they are small and lightweight. This is important as images frequently have to be downloaded and stored, and an image with unnecessary contents is not only less efficient but also has a larger attack surface, which makes it less secure. It’s all too easy to generate a Docker image that becomes quite large and ends up containing lots of stuff that’s not actually needed. Keeping your image lean is quite an art.

Image security

A key concern of using Docker is the need for a Docker daemon that must run as the root user. This introduces potential security risks, especially if the Docker container is also running as the root user, as many do because this is the default option. A well-crafted Docker image will typically not run as the root user, and better still it will be designed to run as an arbitrarily assigned user ID, as is the preferred situation when using OpenShift. Achieving this can require careful consideration. Our informaticsmatters/tomcat images provide a good example of how to achieve this.
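
As a rough illustration of the root-user concern, the Python sketch below inspects the text of a Dockerfile and reports whether the resulting image would start as root. The helper names (`final_user`, `runs_as_root`) and the example Dockerfile are hypothetical and are not taken from our informaticsmatters/tomcat images. The check also assumes a single-stage build whose base image does not set its own USER.

```python
def final_user(dockerfile_text):
    """Return the argument of the last USER instruction, or None if absent."""
    user = None
    for line in dockerfile_text.splitlines():
        parts = line.strip().split(None, 1)
        # Dockerfile instructions are case-insensitive.
        if len(parts) == 2 and parts[0].upper() == "USER":
            user = parts[1].strip()
    return user


def runs_as_root(dockerfile_text):
    """True if the image would start as root (no USER, or USER root / 0)."""
    user = final_user(dockerfile_text)
    if user is None:
        # Assumption: the base image does not set a user, so root is the default.
        return True
    name = user.split(":", 1)[0]  # drop an optional ":group" suffix
    return name in ("root", "0")


# Hypothetical Dockerfile, for illustration only.
EXAMPLE = """\
FROM tomcat:9
RUN chgrp -R 0 /usr/local/tomcat && chmod -R g=u /usr/local/tomcat
USER 1001
"""

print(runs_as_root(EXAMPLE))
```

The `chgrp`/`chmod g=u` line in the example reflects a commonly recommended pattern for OpenShift: an arbitrarily assigned user ID belongs to the root group, so giving that group the same permissions as the owner lets the container work whatever ID it is given.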

Image maintenance

The job is not over once your Docker image is created. You need to keep it up to date. What if there is a critical bug like Heartbleed in the underlying operating system? All containers based on your image will contain that bug. You must keep your images up to date so that important updates and fixes are applied. To do this effectively the creation of your images must be fully automated. We can help you with doing this, and processes for doing so are built into our OpenShift based platform.

A simple example of some of these aspects of Docker images can be found in our informaticsmatters/tomcat image, and a more complex example in our family of RDKit images. The full range of Docker images that Informatics Matters has created can be found on Docker Hub.

For more on this topic take a look at the Smaller containers series on our blog.

Which Cloud for You?

There are multiple clouds. Which one is right for you? Amazon Web Services is the clear market leader and provides a very comprehensive set of services. But it is relatively high cost, and do you want to be locked in to using its services for the long term? Alternatively there are other feature rich cloud providers such as Microsoft’s Azure, Google’s Cloud Platform and DigitalOcean, as well as lower cost providers such as Scaleway that provide a more bare bones service but at 5-10x lower cost. Or you might need to run on your own in-house hardware, maybe using the OpenStack cloud platform.

Not only can we help you decide on the best cloud for you, but we can help you design your software architecture so that you are largely isolated from being locked into any particular choice for the long term. Our platform based on OpenShift is designed to be deployed to all of these cloud and in-house environments.

Monitoring

As your infrastructure expands and becomes more virtualised it becomes increasingly difficult to monitor. Knowing what is going on in your applications is critical to so many aspects, but this information will end up being buried in logs inside your containers, spread across multiple servers. You will not be aware of what errors are occurring in your applications, and will be unable to find out how your users are using them.

Do you know how effectively your computing infrastructure is being used? Is it close to breaking point, or are you overpaying for resources you are not using?

Our OpenShift environments have consolidated logging and metrics deployed as standard, allowing you to easily address these types of questions.

Scalability and Resilience

Things go wrong. Applications crash. Hardware fails. You can, and will, do your best to avoid this, but however hard you try you will never be entirely successful. Things will still go wrong for one reason or another. Ask British Airways or GitLab and many others.

With modern environments like Kubernetes and OpenShift, the approach to addressing this is subtly changing. Instead of just doing all you can to prevent failures, these systems actually assume things will fail and do their best to handle this. If a software application fails it is automatically replaced with a new instance. If hardware fails, the software that was running on it is restarted on other hardware and replacement hardware is started. Redundancy is built into the system.
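
The "assume things will fail" approach can be pictured as a control loop that keeps comparing what should be running with what actually is. The Python sketch below is a toy model of one pass of such a loop, not Kubernetes or OpenShift code. The function name `reconcile`, the instance and node names, and the least-loaded placement rule are all assumptions made for illustration.

```python
import itertools

_ids = itertools.count(1)  # source of names for replacement instances


def reconcile(desired_replicas, instances, healthy_nodes):
    """One pass of a toy control loop.

    `instances` maps instance name -> node name. Instances on failed nodes
    are dropped, then replacements are started on the least-loaded healthy
    node until the desired replica count is reached.
    """
    survivors = {name: node for name, node in instances.items()
                 if node in healthy_nodes}
    while len(survivors) < desired_replicas and healthy_nodes:
        load = {node: 0 for node in healthy_nodes}
        for node in survivors.values():
            load[node] += 1
        target = min(sorted(load), key=load.get)
        survivors[f"replacement-{next(_ids)}"] = target
    return survivors


before = {"app-1": "node-a", "app-2": "node-b", "app-3": "node-c"}
# node-b has failed, so only node-a and node-c are healthy.
after = reconcile(3, before, healthy_nodes={"node-a", "node-c"})
print(after)
```

The instance that was on the failed node disappears and a replacement appears on a surviving node, so the replica count returns to three without anyone intervening.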

Software applications are designed to scale automatically according to demand. You may not hit a “Super Bowl moment”, but knowing that your application will elastically meet any increased demand and then scale back when that demand reduces, all automatically, will give you a greater degree of comfort.
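
To give a feel for how scaling with demand can work, the sketch below applies the basic rule documented for the Kubernetes Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the observed metric to its target, rounded up. The function name, the metric values and the replica limits are illustrative assumptions, and the real autoscaler adds refinements (such as a tolerance band) that are left out here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Scale the replica count by how far the metric is from its target."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp to the configured limits (illustrative defaults).
    return max(min_replicas, min(max_replicas, raw))


# Load rises: 4 replicas at 90% CPU against a 60% target.
print(desired_replicas(4, current_metric=90, target_metric=60))  # 6
# Load falls: 6 replicas at 20% CPU against a 60% target.
print(desired_replicas(6, current_metric=20, target_metric=60))  # 2
```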

Can your business afford not to stay on top of these issues?

Security and Single Sign On

Building systems with open source tools has many benefits, but the systems you build must be robust and secure. This is a key part of our design philosophy. An important aspect is how you handle user authentication and authorisation. How many times have you been frustrated as a user by having to handle multiple usernames and passwords? Single Sign-On (SSO) using Keycloak (Red Hat’s SSO solution) is built into our infrastructures so that once you log in you are logged in to all applications. The login process can be delegated to your organisation’s LDAP or Active Directory servers. Handling users is now managed by robust commercial grade software, not something you need to create and manage yourself.

Upgrading Applications

We believe that the process of upgrading applications should be built into your development practices. We can provide mechanisms for automated rolling upgrades of your applications. More can be found on the Automation and deployment page.
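
As a simple picture of what a rolling upgrade means, the toy Python sketch below replaces instances in small batches so that most of the application keeps serving requests throughout. It is not our deployment mechanism. The function name and version strings are hypothetical, and `max_unavailable` simply borrows the name of the corresponding Kubernetes rolling update setting.

```python
def rolling_upgrade(instances, new_version, max_unavailable=1):
    """Yield the fleet after each step of a batch-by-batch upgrade.

    `instances` is a list of version strings, one per running instance.
    """
    fleet = list(instances)
    pending = [i for i, version in enumerate(fleet) if version != new_version]
    for start in range(0, len(pending), max_unavailable):
        # Only this batch is out of service while it is being replaced.
        for i in pending[start:start + max_unavailable]:
            fleet[i] = new_version
        yield list(fleet)


for step in rolling_upgrade(["1.0", "1.0", "1.0"], "2.0"):
    print(step)
```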

Backup and Disaster Recovery

How do you reduce the occurrence of software and hardware failures? More importantly, how do you cope when such failures inevitably happen?

Backup and disaster recovery procedures are a critical part of your business. Let us help you with this, or manage this for you.